Some have characterized the open standard WebRTC (Web Real-Time Communication) as the gateway to a communication promised land that can connect people virtually anywhere in real time. Indeed, if you want to deliver real-time communications and low-latency video streaming in web browsers, WebRTC is currently your only widely supported option. The challenge, as developers in this space know only too well, is that WebRTC by itself doesn’t scale. To truly scale WebRTC applications, you must use topologies designed to extend its capabilities. Each of these topologies has its pros and cons, and which is best for a given application depends on the anticipated use cases.

This post examines the advantages and disadvantages of four WebRTC topologies designed to support low-latency video streaming in modern browsers: P2P, SFU, MCU, and XDN. Three of these (SFU, MCU, and XDN) attempt to resolve WebRTC’s scalability issues, with varying results. Only XDN, however, takes a new approach to deploying video-enriched experiences at scale, one that even the most experienced WebRTC gurus may be unfamiliar with. We’ll summarize the advantages and drawbacks of each topology to help you decide which is best for your WebRTC-based streaming application.

Peer to Peer (P2P)

P2P, or mesh, is the easiest and most cost-effective architecture to set up in a WebRTC application; it’s also the least scalable. In a mesh topology, two or more peers (clients) talk to each other directly or, when a firewall prevents a direct connection, via a TURN server, which relays audio, video, and data streams between them.

P2P WebRTC applications can be resource intensive because the burden of encoding and decoding streams falls on each of the peers. This is why they perform best with only a small number of concurrent connections. Although you can achieve some level of scalability by configuring a P2P mesh network, you still end up with a resource-intensive and inefficient application. On the plus side, mesh provides the best end-to-end encryption because it doesn’t depend on a centralized server to encode or decode streams.
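To make the scaling problem concrete, here is a minimal sketch of how the load grows in a full mesh, assuming every peer connects directly to every other peer and sends one copy of its stream per connection. The function name `mesh_load` is ours, chosen for illustration.

```python
def mesh_load(participants: int) -> dict:
    """Count connections and per-peer streams in a full-mesh call."""
    if participants < 2:
        raise ValueError("a mesh call needs at least two participants")
    others = participants - 1
    return {
        # Every pair of peers shares one direct connection.
        "total_connections": participants * others // 2,
        # Assumption: each peer sends one copy of its stream per remote peer.
        "upstreams_per_peer": others,
        "downstreams_per_peer": others,
    }


for n in (2, 4, 8):
    print(n, mesh_load(n))
```

Going from four to eight participants roughly quadruples the number of connections in the call, while each device has to send and receive seven streams instead of three.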

The only somewhat complicated aspect of a mesh architecture is the signaling process that connects all the P2P devices. However, discovering peers and connecting them is a requirement for all WebRTC-based applications (including the other architectures we discuss below). It’s also important to note that signaling was never part of the WebRTC API itself. Thankfully, WHIP and WHEP (the WebRTC-HTTP Ingestion Protocol and the WebRTC-HTTP Egress Protocol) are now available to address the need for a standard approach to signaling.
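As a rough illustration of what WHIP signaling looks like on the wire, the sketch below builds (but does not send) the HTTP request a publisher would make: a POST carrying the SDP offer with content type `application/sdp`, optionally authenticated with a bearer token. A WHIP server answers with `201 Created`, the SDP answer in the body, and a `Location` header identifying the session. The endpoint URL, the token, and the placeholder offer are assumptions for illustration; a real offer would come from the client's WebRTC stack.

```python
from typing import Optional
from urllib.request import Request


def build_whip_request(endpoint: str, sdp_offer: str,
                       token: Optional[str] = None) -> Request:
    """Build the HTTP POST that carries an SDP offer to a WHIP endpoint."""
    headers = {"Content-Type": "application/sdp"}
    if token:
        headers["Authorization"] = f"Bearer {token}"
    return Request(endpoint, data=sdp_offer.encode("utf-8"),
                   headers=headers, method="POST")


# Hypothetical endpoint and token; the offer is a placeholder, not valid SDP.
req = build_whip_request("https://media.example.com/whip/live",
                         "v=0\r\n", token="demo-token")
print(req.get_method(), req.full_url)
```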

Advantages of P2P Architecture

- Easy to set up using a basic WebRTC implementation

- End-to-end encryption and better security

- Saves money and developer resources because it doesn’t require a media server

Drawbacks

- Supports only a small number of connections without a noticeable decline in streaming quality

- CPU intensive on devices because the processing of streams is offloaded to each peer

Selective Forwarding Unit (SFU)

SFU is perhaps the most popular architecture in modern WebRTC applications. Put simply, an SFU is a pass-through routing technology designed to offload some of the stream processing from the client to the server. Each participant sends their encrypted media streams once to a centralized WebRTC-compatible server, which then forwards those streams, without further processing, to the other participants. An SFU is more upload-efficient than a mesh topology: on a call with n participants, each client sends only one upstream rather than n-1. However, WebRTC clients still have to decode and render n-1 downstreams. As the number of WebRTC connections grows, this drains client resources and reduces video quality, which limits scalability.
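The stream counts for an SFU call can be sketched in a few lines, assuming every participant publishes one stream and subscribes to all the others. The function name `sfu_load` is illustrative.

```python
def sfu_load(participants: int) -> dict:
    """Count streams per client and on the server for an SFU call."""
    if participants < 2:
        raise ValueError("a call needs at least two participants")
    others = participants - 1
    return {
        "upstreams_per_client": 1,           # one copy sent to the SFU
        "downstreams_per_client": others,    # one stream per remote participant
        # The server forwards each incoming stream to every other client.
        "streams_forwarded_by_server": participants * others,
    }


for n in (4, 10, 50):
    print(n, sfu_load(n))
```

The upload side stays constant, but both the client's decode work and the server's forwarding work keep growing with the size of the call.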

The traditional SFU setup is also limited to running on a single media server, which limits the ability to scale to a very large number of connections. Developers are forced to compromise when they create their applications to limit the number of WebRTC connections in a session. To date, SFU is the most widely used WebRTC technology for video conferencing applications targeting modern browsers, which is also why most video conferencing solutions only allow up to a hundred or so users in a session.

Advantages of SFU Architecture

- Requires less upload bandwidth than a P2P mesh

- WebRTC streams are separate, so each can be rendered individually – allowing full control of the layout of streams on the client side

Drawbacks

- Limited scalability (cannot exceed the capacity of a single WebRTC media server)

- Higher operational costs as some CPU load is shifted to the server

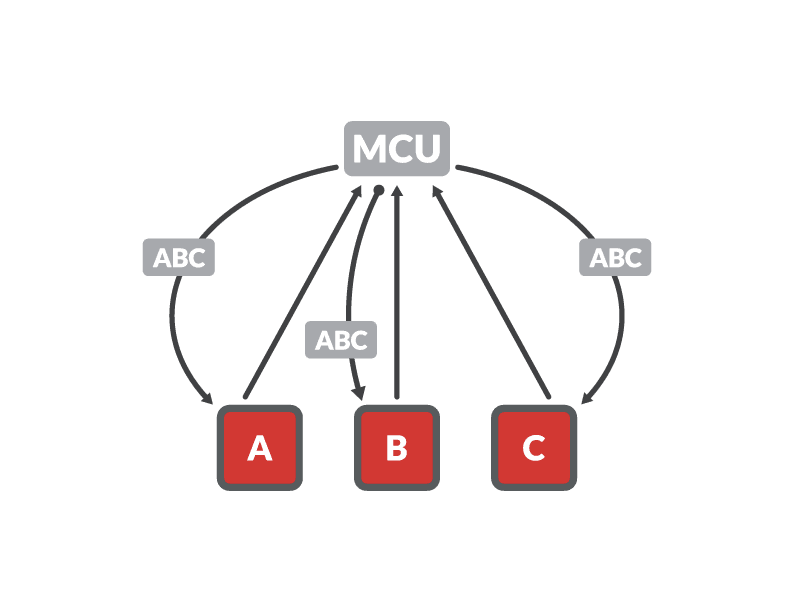

Multipoint Conferencing Unit (MCU)

MCU has been the backbone of large-group conferencing systems for many years. This is not surprising given its ability to deliver stable, low-bandwidth audio/video streaming by offloading much of the CPU-intensive stream processing from the client devices to a centralized server.

In an MCU topology, each of the client devices is connected to a centralized MCU server, which decodes, rescales, and mixes all incoming streams into a single new stream and then encodes and sends it to all clients. Although bandwidth-friendly and less CPU-intensive on the client side—instead of processing multiple streams, devices have to decode and render only one stream—an MCU solution is rather expensive on the server side. Transcoding multiple audio and video streams into a single media stream and then encoding it at multiple resolutions in real time is very CPU intensive, and the more clients connected to the server, the higher its CPU requirements.
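One piece of the mixing step is deciding where each rescaled stream lands in the composite frame. The sketch below computes a simple near-square grid of tiles; it is a hypothetical layout policy for illustration, not how any particular MCU works, and the 1280x720 output size is an assumed default.

```python
import math


def grid_layout(stream_count: int, frame_width: int = 1280,
                frame_height: int = 720) -> list:
    """Return (x, y, width, height) tiles for compositing streams into one frame."""
    if stream_count < 1:
        raise ValueError("need at least one stream to mix")
    cols = math.ceil(math.sqrt(stream_count))
    rows = math.ceil(stream_count / cols)
    tile_w, tile_h = frame_width // cols, frame_height // rows
    # Fill the grid row by row, left to right.
    return [
        ((index % cols) * tile_w, (index // cols) * tile_h, tile_w, tile_h)
        for index in range(stream_count)
    ]


for tile in grid_layout(5):
    print(tile)
```

Each incoming stream must be decoded and rescaled to its tile before the composite is encoded again, which is where the server-side CPU cost comes from.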

As with an SFU, a traditional MCU is limited to running on a single server, which limits both its ability to scale and its ability to distribute the load across the world.

One of the greatest benefits of an MCU, however, is its ease of integration with external (legacy) business systems because it combines all incoming streams into a single, easy-to-consume outgoing stream. While WebRTC-based web applications are increasingly ubiquitous, the ability to integrate with existing systems remains essential.

Note that the XDN architecture outlined below incorporates MCU functionality through its mixer node, which lets developers offload stream processing from the WebRTC-based native or web application.

Advantages of MCU Architecture

- Bandwidth friendly

- Composite output simplifies integration with external services (beyond WebRTC)

- Your only option when you need to combine many streams (unless you use an XDN approach, which we’ll discuss next)

Drawbacks

- CPU-intensive; a large number of video and audio streams requires a beefier server

- Single point-of-failure risk because of centralized processing

- High operational costs due to computational load on the server

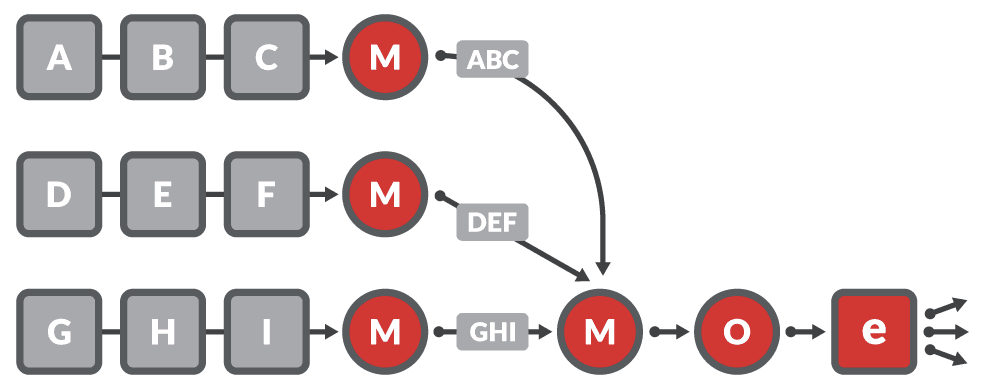

Experience Delivery Network (XDN)

XDN presents a new approach to extending the WebRTC open standard by combining elements of SFU and MCU. Unlike SFU and MCU, however, XDN uses a cloud-based clustering architecture rather than a centralized server to tackle WebRTC’s scalability issues. Each cluster consists of a system of distributed server instances, or nodes, which can be origin, relay, or edge nodes. Within this topology, any given origin node ingests incoming streams and communicates with multiple edge nodes to support thousands of participants. For larger deployments, origin nodes can stream to relay nodes, which in turn stream to multiple edge nodes, scaling the cluster even further.
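A back-of-the-envelope sketch shows why adding a relay tier matters. It assumes every origin or relay node streams to the same number of downstream nodes and every edge serves the same number of subscribers; the fan-out of 10 and the 2,000 subscribers per edge are made-up figures, not Red5 Pro specifications.

```python
def edge_count(fan_out: int, relay_tiers: int = 0) -> int:
    """Edges reachable from one origin when every node streams to `fan_out` nodes."""
    if fan_out < 1 or relay_tiers < 0:
        raise ValueError("fan_out must be >= 1 and relay_tiers >= 0")
    return fan_out ** (relay_tiers + 1)


def subscriber_capacity(fan_out: int, subscribers_per_edge: int,
                        relay_tiers: int = 0) -> int:
    """Subscribers one origin can reach through its edge nodes."""
    return edge_count(fan_out, relay_tiers) * subscribers_per_edge


# Hypothetical figures: each node feeds 10 others, each edge serves 2,000 viewers.
print("origin -> edges:          ", subscriber_capacity(10, 2000))
print("origin -> relays -> edges:", subscriber_capacity(10, 2000, relay_tiers=1))
```

Under these assumptions, a single relay tier multiplies the reachable audience by the fan-out without placing any extra load on the origin.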

An XDN also supports so-called mixers that can be deployed between publishers and origin nodes, to combine many streams into a single stream that is then passed on to an origin node. A mixer is essentially an MCU that can be clustered in order to combine many more streams than a single-server MCU could handle.

The brains behind this operational cluster is a stream manager, which controls the nodes, performs load balancing, and replaces nodes should they fail for any reason. The stream manager is also responsible for connecting participants in a live-streaming event: it connects publishers to an origin node and subscribers to an edge node that is geographically closest to them.
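The subscriber-routing step can be sketched as picking the nearest healthy edge by great-circle distance. This is a simplified illustration, not Red5 Pro's actual stream manager logic; the `EdgeNode` type, the node names, and the coordinates are hypothetical, and a production system would likely weigh node load as well.

```python
import math
from dataclasses import dataclass


@dataclass(frozen=True)
class EdgeNode:
    name: str
    lat: float
    lon: float
    healthy: bool = True


def haversine_km(lat1: float, lon1: float, lat2: float, lon2: float) -> float:
    """Great-circle distance between two points on Earth, in kilometers."""
    phi1, phi2 = math.radians(lat1), math.radians(lat2)
    dphi = math.radians(lat2 - lat1)
    dlambda = math.radians(lon2 - lon1)
    a = (math.sin(dphi / 2) ** 2
         + math.cos(phi1) * math.cos(phi2) * math.sin(dlambda / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(a))


def pick_edge(nodes: list, lat: float, lon: float) -> EdgeNode:
    """Return the healthy edge node closest to the subscriber."""
    candidates = [node for node in nodes if node.healthy]
    if not candidates:
        raise RuntimeError("no healthy edge nodes available")
    return min(candidates,
               key=lambda node: haversine_km(lat, lon, node.lat, node.lon))


edges = [
    EdgeNode("frankfurt", 50.11, 8.68),
    EdgeNode("virginia", 38.95, -77.45),
    EdgeNode("singapore", 1.35, 103.82),
]
# A subscriber near Paris is routed to the Frankfurt edge.
print(pick_edge(edges, 48.86, 2.35).name)
```

Filtering out unhealthy nodes before choosing mirrors the failover role described above: if the nearest edge goes down, subscribers are sent to the next closest one.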

XDN technology is designed to meet the demands of the next era of online engagement: video-enriched, interactive experiences that are bound to reshape our personal and commercial lives. It combines elements of SFU and MCU to deliver these video-rich streaming experiences at scale. For an example of how this topology can be deployed today, have a look at our Red5 Pro platform.

By default, Red5 Pro’s implementation of XDN uses an SFU mechanism for stream delivery. And, similar to MCU, it includes a low-level stream-processing engine. The server has full control over the media packets, which it can use to manipulate data as necessary before sending it out to the requesting WebRTC client.

Using this hybrid architecture, WebRTC applications can scale beyond any SFU- or MCU-driven multiparty conferencing applications. For example, Red5 Pro can support real-time, low-latency sports events with watch parties as well as other events that require real-time synchronization among any number of participants, such as auctions, live shopping, or sports betting.

Built upon a cross-cloud platform, Red5 Pro also supports a wide array of hosting options, including AWS, Azure, GCP, and DigitalOcean, as well as more than a dozen other IaaS providers using Terraform. Bare metal server installations can be integrated as well by installing a cloud-like API such as vSphere in a private data center. Having this variety of virtual server hosting platforms maximizes flexibility for full scalability and geographic dispersion.

Advantages

- Fully scalable WebRTC technology

- Flexible hosting with cross-cloud distribution

- Provides the best of both MCU and SFU in one scalable package

- Allows for future-focused user experiences

Drawbacks

- More complex than other real-time streaming topologies

Which WebRTC Architecture Is Right for You?

P2P, SFU, and MCU each have their advantages and drawbacks with regard to building low-latency, real-time communication for both current and future applications. P2P is optimal for simple video chat applications that require real-time communication between two to four concurrent participants. SFUs and MCUs address WebRTC’s scalability issues to a certain extent, facilitating real-time communication in more complex scenarios. An SFU is best utilized in multiparty conferencing applications that demand real-time interaction without too many concurrent participants. Using an MCU is crucial for large-scale conference applications, fan walls, and other use cases where a multitude of participant streams need to be rendered on a single WebRTC client.

Despite their popularity, none of these WebRTC architectures offer the flexibility and scalability of a hybrid cluster topology such as XDN, which supports real-time communication connectivity across any configuration of any number of concurrent users at any distance, ensuring seamless interactions regardless of the scale or complexity of the application.

To learn more about the unlimited scalability of WebRTC on the Red5 Pro XDN platform, contact info@red5.net or set up a call to discuss your ideas. For our take on the inevitable shift to real-time video experiences, see our introduction to XDN.